Ah yes, ChatGPT. The latest and greatest AI assistant that seems to have taken the internet by storm. After crossing the one-million-user mark less than a week after its launch and then going even more viral, ChatGPT has absolutely flooded social media. People from all over the world are sharing screenshots of the ways their new AI pal has helped them: authors using it to get past writer’s block, software engineers correcting code, teachers making lesson plans, and even therapists using it to help treat their patients… Naturally, our next question was: what about pre-med students using ChatGPT to study for the MCAT?

AI in medicine is an incredibly complex subject. Say you rely on an AI to create a treatment plan for a patient and the AI is wrong. That could lead to a terrible outcome for everyone involved. AI in medical education, however, is without a doubt lower stakes. This is why you might feel comfortable using a tool like ChatGPT to help you study for the MCAT or for exams later in medical school, even if you wouldn’t use AI in a clinical setting. But even with comparatively less at risk, can you trust ChatGPT with your MCAT score? That’s exactly the question we set out to answer in this post.

Our experiment was simple: 1) grab some MCAT-related student questions from Reddit, 2) run the questions through ChatGPT, and 3) have our MCAT content team evaluate the responses for clarity and (most importantly) correctness.

The results were… somewhat surprising.

Exhibit A: “Oh my god this might actually work.”

Based on our prior experience trying to get AIs to answer MCAT questions, you can imagine our excitement when seeing ChatGPT’s response here. While this answer doesn’t perfectly address u/team_mitochondria’s question, it is by far the best AI response to an MCAT question we’ve ever seen. The response provides factually correct information, touches on relevant topics, and therefore generally gets a thumbs up from us.

Exhibit B: “Holy Sh*t this actually works”

This is the point at which our team got really excited. ChatGPT not only provided factually correct information in response to this MCAT question, it also gave an insightful and genuinely helpful reply. If ChatGPT were able to consistently turn out this caliber of response to MCAT questions, we would probably recommend it as a supplementary resource to the actual AAMC answer explanations! BUT that brings us to:

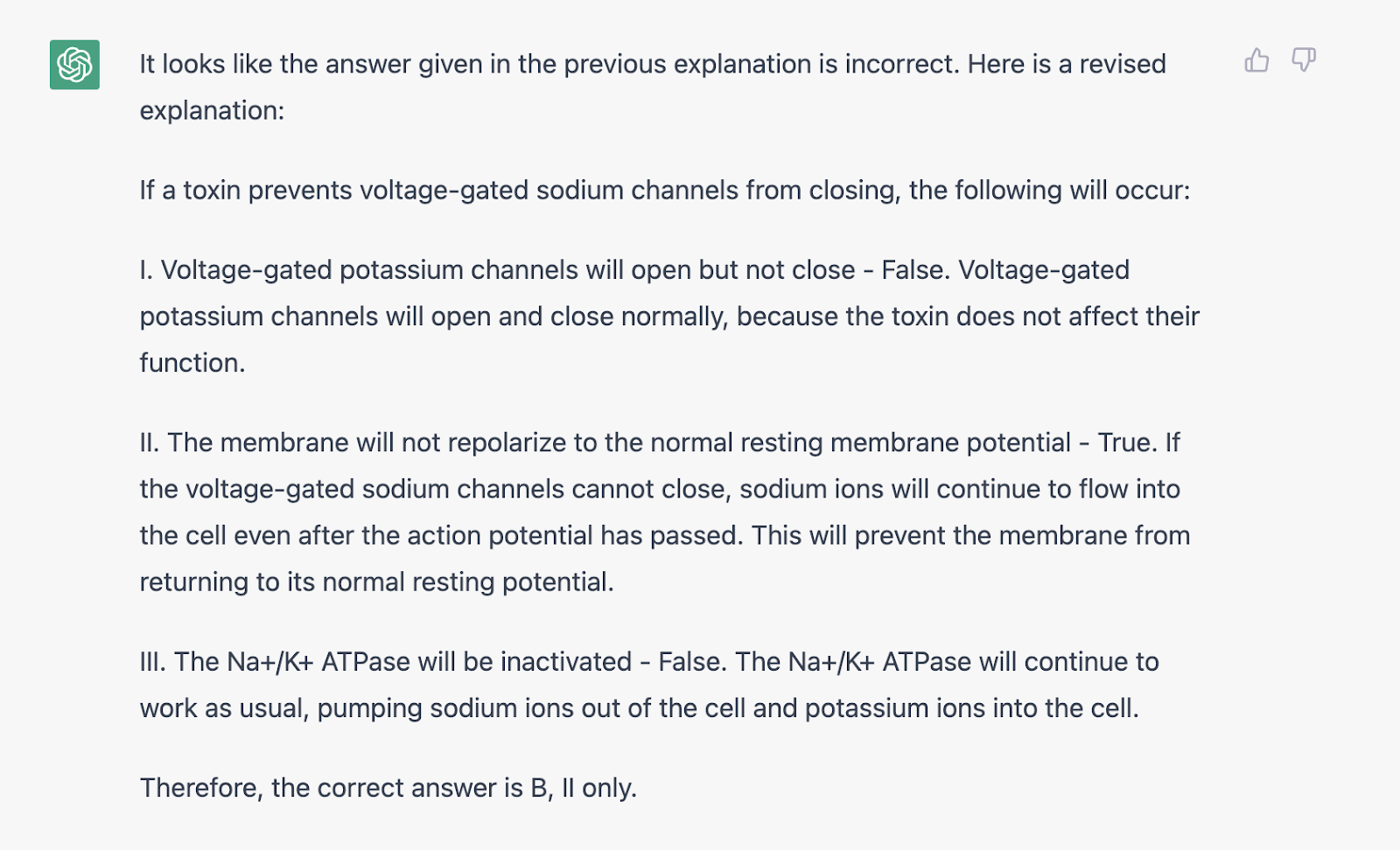

Exhibit C: “Going Downhill - Part 1”

Well. It was good while it lasted, right? u/TruthandFalsehood is definitely confused here, and this is a good example of where AIs like ChatGPT can sometimes do more harm than good when it comes to MCAT help. In this scenario, just as the disclaimer on the website warns can occasionally happen, ChatGPT is factually incorrect in its response. The answer “C: I and II Only” IS the correct answer, but ChatGPT has reinforced the student’s confusion by validating the concern that the exam may be incorrect. Whether by design or because we’re still smarter than AIs, ChatGPT is ONLY considering the toxin when addressing “I” even though there are additional factors to pay attention to, as discussed in the real answer explanation. What’s more, ChatGPT doesn’t really address the student’s confusion at all in its response.

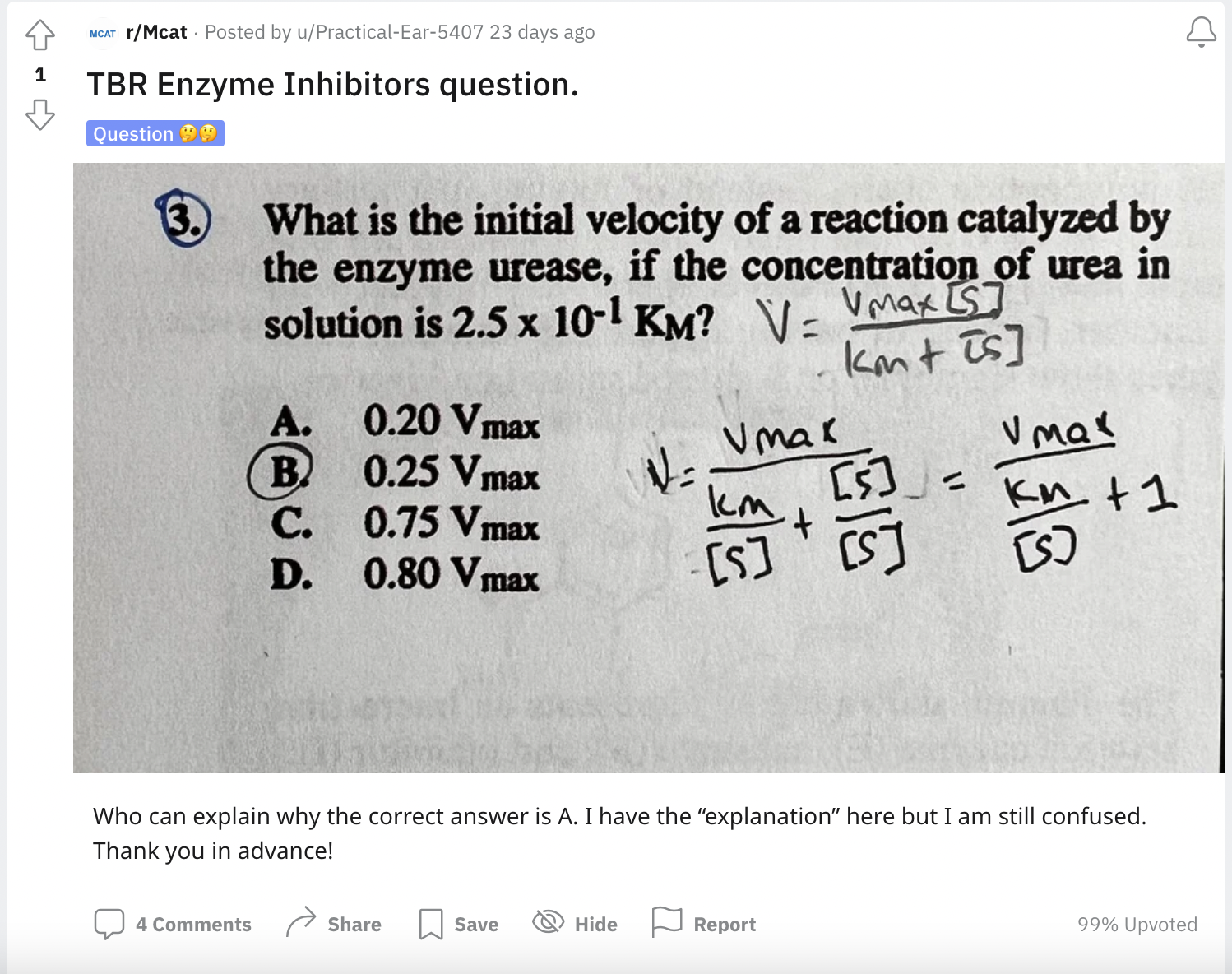

Exhibit D: “Going Downhill - Part 2”

To be honest, this one sort of made us laugh. ChatGPT seems to REALLY not want to think in abstract terms: that’s a hilariously long list of parameters it wants to know before it even approaches the problem. Bad AI!

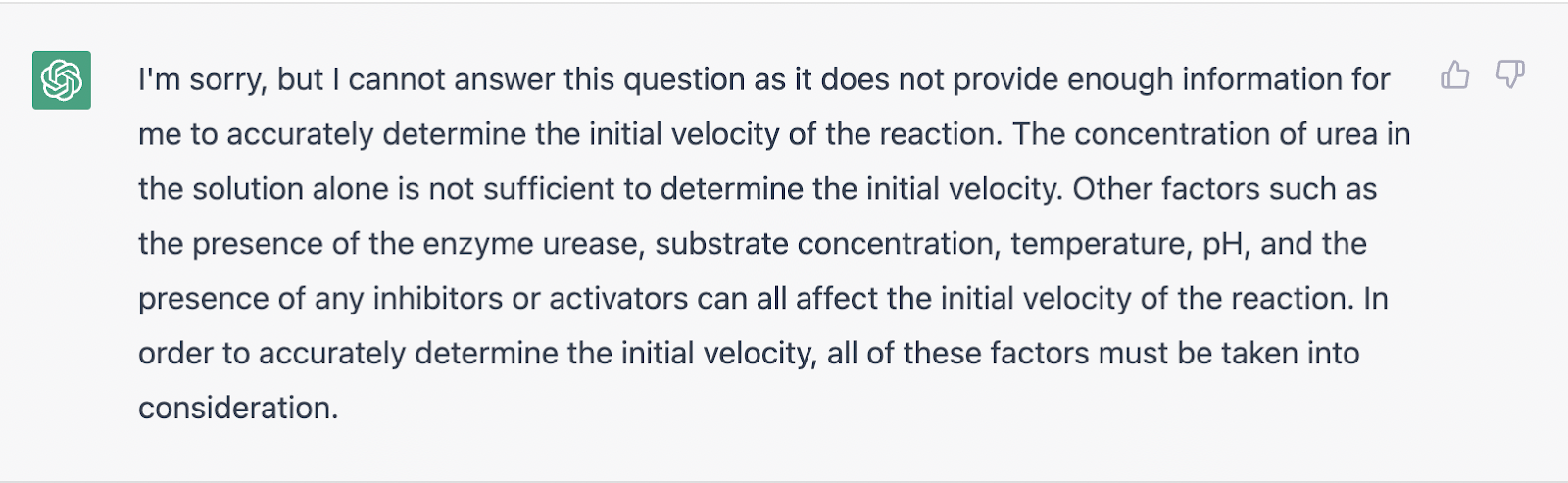

Exhibit E: “But maybe there’s hope?”

To ChatGPT’s credit, it is kind of hard to know what’s going on in this one without the question. That said, most of what ChatGPT is saying is correct. And bonus points for a very polite sign-off. Good AI!

In conclusion, it seems like ChatGPT is still a little way from being a reliable answer explanation tool to help you while studying for the MCAT. For some more straightforward questions it can provide helpful insight, but if you’re really confused about something, we recommend you look elsewhere for clarity. The problem with using an AI such as ChatGPT to help you study for the MCAT remains that it can be incorrect in its responses but still come across as convincing. For a confused student, this can without a doubt cause some serious problems. All in all, we’re optimistic for the future, but in the present, we recommend sticking to more tried-and-true methods of studying (*cough, cough* like Sketchy) and steering away from AI. But definitely feel free to have it write you bedtime stories.